
👉 For Visual Studio 2022: here

👉 For Visual Studio 2019: here

This is an extension that adds chatGPT functionality directly within Visual Studio.

You can consult chatGPT directly from the text editor or through new dedicated tool windows.

Watch here some examples:

The newly introduced Copilot functionality enhances your coding experience by providing intelligent code suggestions as you type.

When you start writing code, simply press the Enter key to receive contextual suggestions that can help you complete your code more efficiently. Confirm a suggestion by pressing the TAB key.

You can disable the Copilot functionality in the options.

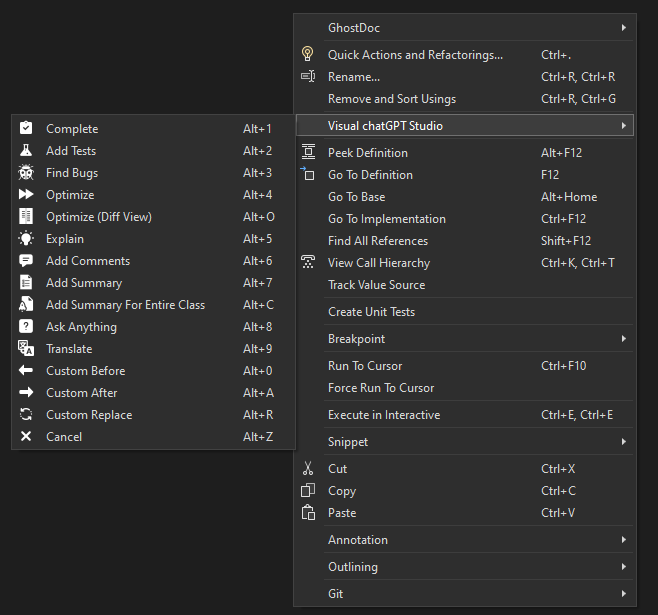

Select a method and right-click in the text editor to see these new chatGPT commands:

- Complete: Start writing a method, select it, and ask for completion.

- Add Tests: Create unit tests for the selected method.

- Find Bugs: Find bugs in the selected code.

- Optimize: Optimize the selected code.

- Optimize (Diff View): Optimize the selected code. Instead of writing the result in the code editor, a new window opens where you can compare the original code with the version optimized by chatGPT.

- Explain: Write an explanation of the selected code.

- Add Comments: Add comments to the selected code.

- Add Summary: Add a summary for C# methods.

- Add Summary For Entire Class: Add a summary for an entire C# class (for methods, properties, enums, interfaces, classes, etc). You don't need to select the code; just run the command to start the process.

- Ask Anything: Write a question in the code editor and wait for an answer.

- Translate: Replace the selected text with the translated version. In the Options window, edit the command if you want to translate to a language other than English.

- Custom Before: Create a custom command through the options that inserts the response before the selected code.

- Custom After: Create a custom command through the options that inserts the response after the selected code.

- Custom Replace: Create a custom command through the options that replaces the selected text with the response.

- Cancel: Cancel any command request that is being received or awaited.

If you want the responses to be written in the tool window instead of the code editor, press and hold the SHIFT key and select the command (this does not work with the shortcuts).

The pre-defined commands can be edited at will to meet your needs and the needs of the project you are currently working on.

It is also possible to define specific commands per Solution or Project. If you are not working on a project that has specific commands for it, the default commands will be used.

Some examples of what you can do:

- Define a specific framework or language: For example, you can have specific commands to create unit tests using MSTest for one project and xUnit for another.

- Work in another language: For example, if you work on projects that use a language different from your mother language, you can set commands in your language for some projects and commands in another language for others.

- Etc.
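The fallback described above, where project-specific commands take precedence and the default commands fill the gaps, can be pictured with a small Python sketch. The command names, prompt texts, project names, and the `resolve_command` helper are all hypothetical and only illustrate the lookup order; they are not taken from the extension's code.

```python
# Hypothetical default prompts; the real extension stores its commands in the options.
DEFAULT_COMMANDS = {
    "AddTests": "Create unit tests for the selected method.",
    "Translate": "Translate the selected text to English.",
}

# Hypothetical per-project overrides, keyed by project name.
PROJECT_COMMANDS = {
    "Billing.Api": {"AddTests": "Create unit tests using MSTest for the selected method."},
    "Billing.Web": {"AddTests": "Create unit tests using xUnit for the selected method."},
}


def resolve_command(project, name):
    """Return the project-specific prompt if one exists, otherwise the default one."""
    overrides = PROJECT_COMMANDS.get(project, {})
    return overrides.get(name, DEFAULT_COMMANDS[name])
```

With these sample values, `resolve_command("Billing.Api", "AddTests")` yields the MSTest prompt, while a project without overrides gets the default prompt.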

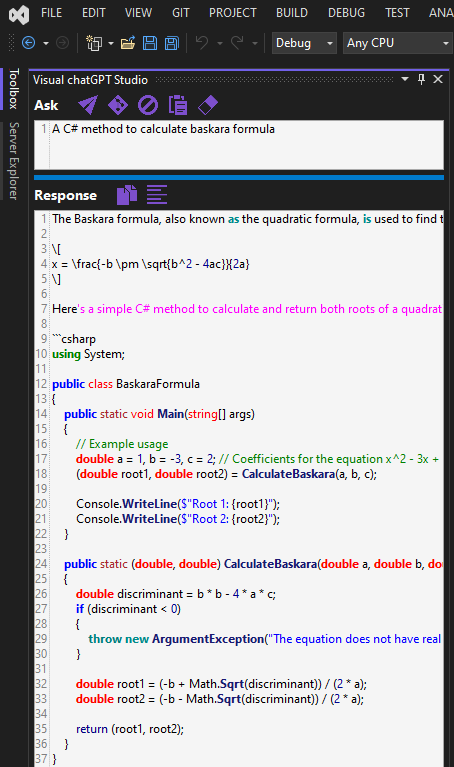

In this tool window you can ask questions to chatGPT and receive answers directly in it.

You can also redirect the responses of commands executed in the code editor to this window by holding the SHIFT key while executing a command. This way you avoid editing the code when you don't want to, and you can validate the result before inserting it into the project.

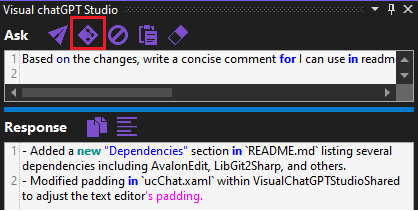

In this window you can also create a Git push comment based on pending changes by clicking this button:

No more wasting time thinking about what you are going to write for your changes!

Through the Generate Git Changes Comment Command option of this extension, you can edit the request command. Ideal if you want comments to be created in a language other than English, and/or if you want the comment to follow some other specific format, etc.

You will find this window in menu View -> Other Windows -> Visual chatGPT Studio.

In this window editor you can interact directly with chatGPT as if you were in the chatGPT portal itself:

Unlike the previous window, in this one the AI "remembers" the entire conversation.
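A chat that "remembers" is commonly achieved by resending the accumulated message history with every request. The Python sketch below shows that idea using the role/content message shape of the OpenAI chat format. The `Conversation` class and the model name are hypothetical, no network call is made, and this is not necessarily how the extension implements it.

```python
class Conversation:
    """Keeps the full message history so that each request carries the prior turns."""

    def __init__(self, system_prompt, model):
        self.model = model  # placeholder model name supplied by the caller
        self.messages = [{"role": "system", "content": system_prompt}]

    def ask(self, user_text):
        """Record the user's turn and return the payload that would be sent."""
        self.messages.append({"role": "user", "content": user_text})
        # Copy the list so earlier payloads are not changed by later turns.
        return {"model": self.model, "messages": list(self.messages)}

    def record_answer(self, answer_text):
        """Store the assistant's reply so the next request includes it."""
        self.messages.append({"role": "assistant", "content": answer_text})
```

Each call to `ask` produces a payload that contains every earlier question and answer, which is also why long conversations consume more tokens per request.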

You can also interact with the open code editor through the Send Code button. With this button the OpenAI API becomes aware of all the code in the open editor, and you can request changes directly to your code, for example:

- Ask to add a new method on a specific line, or between two existing methods;

- Change an existing method to add a new parameter;

- Ask if the class has any bugs;

- Etc.

But pay attention: because this sends the entire code of the open file to the API, it can increase token consumption. You may also reach the per-request token limit sooner, depending on the model you are using. Choosing a model with a larger token limit can solve this limitation.

When executing this command, you can also hold down the SHIFT key while pressing the Send Code button so that the code is written directly in the chat window instead of the code editor, in case you want to preserve the original code and/or analyze the response before applying it to the open code editor.

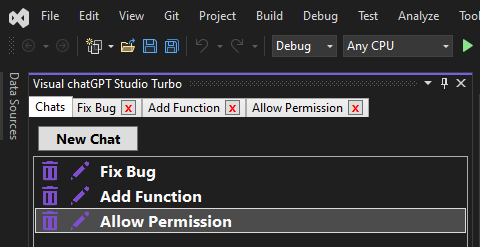

You will also be able to keep multiple chats open at the same time in different tabs. And each chat is kept in history, allowing you to continue the conversation even if Visual Studio is closed:

You will find this window in menu View -> Other Windows -> Visual chatGPT Studio Turbo.

Watch here some examples using the Turbo Chat:

Here you can add project items to the context of requests to OpenAI. Ideal for making requests that require knowledge of other points of the project.

For example, you can request the creation of a method in the current document that consumes another method from another class, which was selected through this window.

You can also ask to create unit tests in the open document of a method from another class referenced through the context.

You can also request an analysis that involves a larger context involving several classes. The possibilities are many.

But pay attention: depending on the amount of code you add to the context, this can increase token consumption. You may also reach the per-request token limit sooner, depending on the model you are using. Choosing a model with a larger token limit can solve this limitation.
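To get a feeling for how quickly added context approaches a token limit, a rough estimate can help. The Python sketch below assumes the common rule of thumb of about four characters per token for English text, which is only an approximation and not a real tokenizer. The function names, the reserved answer size, and the sample file names are hypothetical.

```python
CHARS_PER_TOKEN = 4  # rough rule of thumb for English text, not an exact tokenizer


def estimate_tokens(text):
    """Very rough token estimate based on character count (rounded up)."""
    return (len(text) + CHARS_PER_TOKEN - 1) // CHARS_PER_TOKEN


def fits_in_budget(context_items, token_limit, reserved_for_answer=1000):
    """Return the estimated total and whether it leaves room for the answer.

    context_items maps a file name to its source text.
    """
    total = sum(estimate_tokens(text) for text in context_items.values())
    return total, total + reserved_for_answer <= token_limit
```

For example, two files of 4,000 and 8,001 characters are estimated at 3,001 tokens in total, which fits a 4,096-token limit only if no more than about 1,000 tokens are reserved for the answer.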

You will find this window in menu View -> Other Windows -> Visual chatGPT Studio Solution Context.

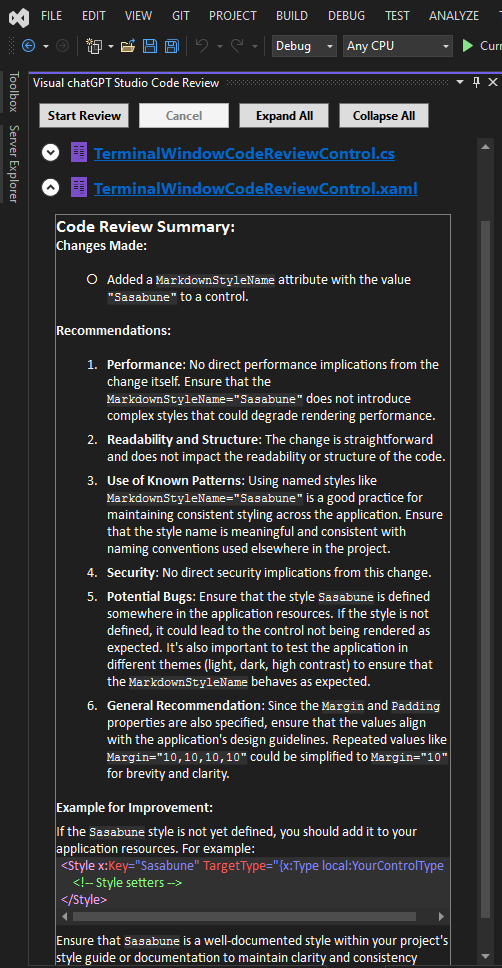

The Code Review Tool Window feature is designed to enhance the development workflow by automatically generating code reviews based on Git Changes in a project. This innovative functionality aims to identify potential gaps and areas for improvement before a pull request is initiated, ensuring higher code quality and facilitating a smoother review process.

- Git Changes Detection: The feature automatically detects any changes made to the codebase in Git.

- Automatic Review Generation: Upon detecting changes, the system instantly analyzes the modifications using the power of AI. It evaluates the code for potential issues such as syntax errors, code smells, security vulnerabilities, and performance bottlenecks.

- Feedback Provision: The results of the analysis are then compiled into a comprehensive code review report. This report includes detailed feedback on each identified issue, suggestions for improvements, and best practice recommendations.

- Integration with Development Tools: The feature seamlessly integrates with Visual Studio, ensuring that the code review process is a natural part of the development workflow.

- Edit the command: Through the extension options, it is possible to edit the command that requests the Code Review for customization purposes.

- Early Detection of Issues: By identifying potential problems early in the development cycle, developers can address issues before they escalate, saving time and effort.

- Improved Code Quality: The automatic reviews encourage adherence to coding standards and best practices, leading to cleaner, more maintainable code.

- Streamlined Review Process: The feature complements the manual code review process, making it more efficient and focused by allowing reviewers to concentrate on more complex and critical aspects of the code.

- Enhanced Collaboration: It fosters a culture of continuous improvement and learning among the development team, as the automated feedback provides valuable insights and learning opportunities.

If you find Visual chatGPT Studio helpful, you might also be interested in my other extension, Backlog chatGPT Assistant. This powerful tool leverages AI to create and manage backlog items on Azure DevOps directly within Visual Studio. Whether you're working with pre-selected work items, user instructions, or content from DOCX and PDF files, this extension simplifies and accelerates the process of generating new backlog items.

To use this tool it is necessary to connect through the OpenAI API, Azure OpenAI, or any other API that is OpenAI API compatible.

1 - Create an account on OpenAI: https://platform.openai.com

2 - Generate a new key: https://platform.openai.com/api-keys

3 - Copy and paste the key into the options and set the OpenAI Service parameter to OpenAI:

1 - First, you need to have access to Azure OpenAI Service. You can see more details here.

2 - Create an Azure OpenAI resource, and set the resource name in the options. Example:

3 - Copy and paste the key into the options and set the OpenAI Service parameter to AzureOpenAI:

4 - Create a new deployment through Azure OpenAI Studio, and set the name:

5 - Set the Azure OpenAI API version. You can check the available versions here.
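The resource name, deployment name, and API version set in the steps above are the pieces that make up an Azure OpenAI request URL. As a minimal sketch, assuming the publicly documented Azure OpenAI REST URL pattern for chat completions, the Python below shows how the three values fit together. The helper names and sample values are hypothetical, and how the extension itself builds its requests is not shown here.

```python
from urllib.parse import quote, urlencode


def azure_chat_url(resource_name, deployment_name, api_version):
    """Build a chat completions URL from the three values configured in the options."""
    base = f"https://{resource_name}.openai.azure.com"
    path = f"/openai/deployments/{quote(deployment_name, safe='')}/chat/completions"
    return f"{base}{path}?{urlencode({'api-version': api_version})}"


def azure_headers(api_key):
    """Key-based authentication sends the key in the api-key header."""
    return {"api-key": api_key, "Content-Type": "application/json"}
```

If a request fails with a "resource not found" style error, comparing the configured values against this pattern is a quick way to spot a wrong resource or deployment name.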

In addition to API Key authentication, you can now authenticate to Azure OpenAI using Microsoft Entra ID. To enable this option:

1 - Ensure your Azure OpenAI deployment is registered in Entra ID, and the user has access permissions.

2 - In the extension settings, set the parameter Entra ID Authentication to true.

3 - Define the Application Id and Tenant Id for your application in the settings.

4 - The first time you run any command, you will be prompted to log in using your Microsoft account.

5 - For more details on setting up Entra ID authentication, refer to the documentation here.

It is possible to use a service other than the OpenAI or Azure API, as long as the service is OpenAI API compatible.

This way, you can use APIs that run locally, such as Meta's Llama, or any other private deployment (local or not).

To do this, simply insert the address of the deployment in the Base API URL parameter of the extension.

It's worth mentioning that I haven't tested this possibility myself, so it's a matter of trial and error, but I've already received feedback from people who have done this successfully.
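To illustrate what "OpenAI API compatible" means in practice, the Python sketch below builds (but does not send) a chat completions request against an arbitrary base URL. It assumes the base URL already ends in the version segment, such as `http://localhost:8080/v1`; whether the extension's Base API URL parameter expects that segment is not stated here, so treat it as an assumption. The function name, model name, and local address are hypothetical.

```python
import json


def build_chat_request(base_url, api_key, model, prompt):
    """Return the URL, headers, and JSON body of an OpenAI-style chat request."""
    url = base_url.rstrip("/") + "/chat/completions"
    headers = {"Content-Type": "application/json"}
    if api_key:
        # Local servers often accept requests without a key; send it only if set.
        headers["Authorization"] = f"Bearer {api_key}"
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    )
    return url, headers, body
```

A server that accepts this request shape and answers in the same format as OpenAI is a reasonable candidate for the Base API URL parameter.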

- Issue 1: Occasional delays in AI response times.

- Issue 2: AI can hallucinate in its responses, generating invalid content.

- Issue 3: If the request sent is too long and/or the generated response is too long, the API may cut the response or even not respond at all.

- Workaround: Retry the request changing the model parameters and/or the command.

- As this extension depends on the API provided by OpenAI or Azure, they may make changes that affect the operation of this extension without prior notice.

- As this extension depends on the API provided by OpenAI or Azure, some generated responses may not be what you expected.

- The speed and availability of responses depend directly on the API.

- If you are using the OpenAI service instead of Azure and receive a message like "429 - You exceeded your current quota, please check your plan and billing details.", check the OpenAI Usage page to see whether you still have quota. You can check your quota here: https://platform.openai.com/account/usage

- If you find any bugs or unexpected behavior, please leave a comment so I can provide a fix.

☕️ If you find this extension useful and want to support its development, consider buying me a coffee. Your support is greatly appreciated!

- AvalonEdit

- LibGit2Sharp

- OpenAI-API-dotnet

- sqlite-net-pcl

- MdXaml

- VsixLogger

- Community.VisualStudio.Toolkit.17

- Added the possibility to use your Microsoft Account to authenticate to the Azure OpenAI Service through Entra ID.

- Bug fixes and adjustments.