Deep Perceptual Audio Metric (DPAM) [Paper] [Webpage] [Teaser] [Full Video]

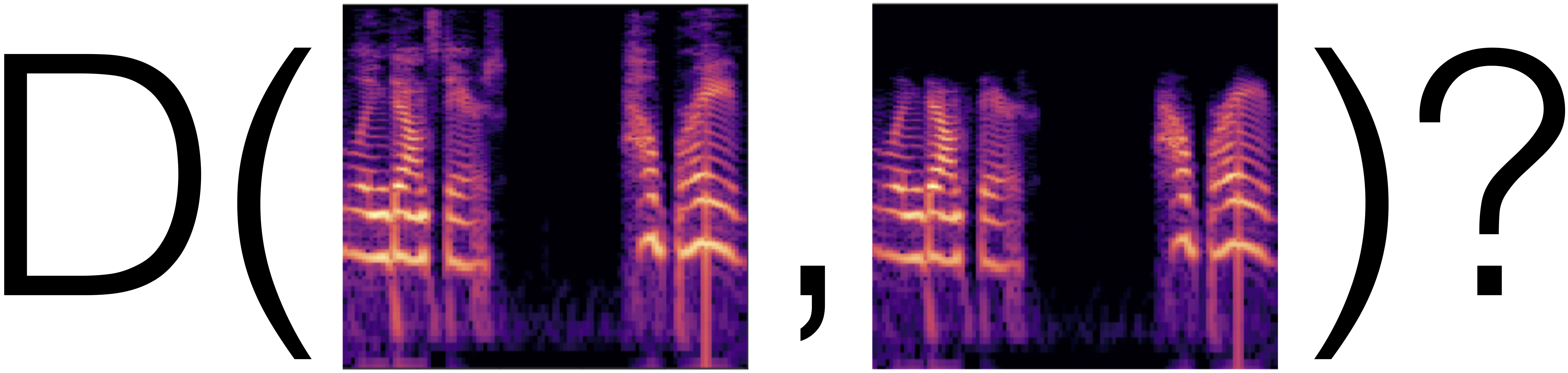

A Differentiable Perceptual Audio Metric Learned from Just Noticeable Differences

Pranay Manocha, Adam Finkelstein, Richard Zhang, Nicholas J. Bryan, Gautham J. Mysore, Zeyu Jin

Accepted at Interspeech 2020

This is a TensorFlow implementation (a PyTorch implementation is here) of our audio perceptual metric. It contains (0) minimal code to run our perceptual metric (DPAM), (1) code to train the perceptual metric on our JND dataset, and (2) an example of using our perceptual metric as a loss function for speech denoising.

Also check out our newest learned metric, CDPAM: Contrastive learning based deep perceptual audio similarity metric

Please run cd cdpam and follow the instructions in the readme there. We also provide pretrained models for:

- Waveform synthesis: We make use of CDPAM for training a waveform synthesis model. We use the codebase from here. We train both single and cross-speaker models.

- Real-time speech enhancement: We make use of CDPAM as a loss function for training an SE model. We use the codebase from here. We simply add CDPAM to their loss for training.

- At the moment, this algorithm requires using 16-bit PCM audio files to perform correctly. You can use sox to convert your file.

- The current pretrained models support sr=22050Hz. Please make sure to resample your files first before using the metric.

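For convenience, you can load your audio clip using librosa.load(filename, sr=22050) and then rescale it to the range [-32768, 32768] using np.round(audio_file.astype(np.float)*32768). On NumPy 1.24 and later, where the np.float alias has been removed, use np.float64 instead. See metric_code/metric_use_simple.py for an example.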
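Before running the metric, it can help to confirm that a file already meets the 16-bit PCM and 22050 Hz requirements noted above. The following is a minimal sketch using only Python's standard wave module. The function name check_wav_format and the two constants are illustrative and are not part of the dpam package.

```python
import wave

EXPECTED_SAMPLE_RATE = 22050  # Hz, the rate the pretrained models support
EXPECTED_SAMPLE_WIDTH = 2     # bytes per sample, i.e. 16-bit PCM


def check_wav_format(path):
    """Return a list of format problems for a WAV file (empty if none).

    Hypothetical helper for illustration; not part of the dpam package.
    """
    problems = []
    try:
        with wave.open(path, "rb") as wav:
            width = wav.getsampwidth()
            rate = wav.getframerate()
    except wave.Error as err:
        # The wave module rejects WAV files it cannot read as PCM.
        return ["not a readable PCM WAV file: %s" % err]
    if width != EXPECTED_SAMPLE_WIDTH:
        problems.append("sample width is %d bytes, expected %d"
                        % (width, EXPECTED_SAMPLE_WIDTH))
    if rate != EXPECTED_SAMPLE_RATE:
        problems.append("sample rate is %d Hz, expected %d"
                        % (rate, EXPECTED_SAMPLE_RATE))
    return problems
```

If the returned list is not empty, convert or resample the file (for example with sox) before computing distances.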

Installing the metric (DPAM - perceptual audio similarity metric)

pip install dpam

Using the metric is as simple as:

import dpam

loss_fn = dpam.DPAM()

wav_ref = dpam.load_audio('sample_audio/ref.wav')

wav_out = dpam.load_audio('sample_audio/2.wav')

dist = loss_fn.forward(wav_ref,wav_out)

Required Python libraries: TensorFlow with GPU support (>=1.14; the code uses TensorFlow's slim library, so it does not support TF 2.0) + SciPy (>=1.1) + NumPy (>=1.14) + tqdm (>=4.0.0). To install them in your Python distribution, run pip install -r requirements.txt.

Additional notes:

- Warning: Make sure your libraries (CUDA, cuDNN, ...) are compatible with the TensorFlow version you're using, or the code will not run.

- Required software (for resampling): SoX, FFmpeg.

- Important note: At the moment, this algorithm requires using 32-bit floating-point audio files to perform correctly. You can use sox to convert your file.

- Tested on an Nvidia GeForce RTX 2080 GPU with CUDA (>=9.2) and cuDNN (>=7.3.0). CPU mode should also work with minor changes.

- The current pretrained models support sr=22050Hz. Please make sure to resample your files first before using the metric.

This version currently supports evaluating the trained metric as a loss function. It does not yet provide a simple interface to backpropagate and train the metric. For training, please clone this repo and follow the instructions below.

pip install dpam

Please look at example_pip.py for an example of how to use the function.

Follow the instructions below to: 1) Evaluate the metric on a few examples, 2) Train a metric on our dataset, 3) Use the metric to optimize a downstream task and 4) Use our pretrained speech enhancement model (trained using our loss function metric).

Running the command below takes two audio files as input and gives the perceptual distance between them. It should return approximately distance = 0.1929. Some GPUs are non-deterministic, so the distance could vary in the least significant digits.

cd metric_code

python metric_use_simple.py --e0 ../sample_audio/ref.wav --e1 ../sample_audio/2.wav
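Because the reported distance can differ slightly from one GPU to another, a sanity check against the reference value is better written with a tolerance than with an exact comparison. The sketch below assumes a tolerance of 1e-3, which is an illustrative choice and not a value specified by the authors. The function name distance_matches is also hypothetical.

```python
import math

REFERENCE_DISTANCE = 0.1929  # approximate value quoted for the sample pair
TOLERANCE = 1e-3             # assumed tolerance, not given by the authors


def distance_matches(dist, reference=REFERENCE_DISTANCE, tol=TOLERANCE):
    """Return True if dist is within tol of the reference distance."""
    return math.isclose(dist, reference, abs_tol=tol)
```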

When loading a large number of files, batch processing is more efficient. Refer to metric_code/metric_use.py for more information. In short, you need to change the dataloader function load_full_data_list(). You also need to provide the path of the trained model as an input argument.
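As a rough illustration of the batching idea, the generic helper below groups a list of (reference, output) file pairs into fixed-size batches. It is only a sketch: the name batched is hypothetical, and it does not reproduce load_full_data_list() or any other function in metric_use.py.

```python
def batched(items, batch_size):
    """Yield consecutive lists of at most batch_size items.

    Hypothetical helper for illustration; not taken from metric_use.py.
    """
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    batch = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:
        # Emit the final, possibly smaller, batch.
        yield batch
```

Each yielded batch could then be loaded and passed to the metric in a single call, instead of evaluating one pair at a time.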

PercepAudio - main directory

- metric_code - Section 1, training our metric on our JND dataset

- se_code - Section 2, training a speech enhancement model using our metric, trained above

- dataset - our JND framework and dataset and text files containing perceptual judgments

- pre-model - sample pre-trained models for easy reference

- sample_audio - sample audio files for comparison

- create_space - sample code for creating perturbations

- PercepAudio (main directory)

  - metric_code

    - main.py (train the loss function)

    - metric_use.py (use the trained model as a metric)

    - dataloader.py (collect and load the audio files)

    - helper.py (misc helper functions)

    - network_model.py (NN architecture)

    - summaries folder to store the new trained model with tensorboard files


Go to link to download the dataset (about 23GB). After downloading the dataset, unzip the dataset into the project folder 'PerceptualAudio/dataset'. Here are the steps to be followed:

git clone https://github.com/pranaymanocha/PerceptualAudio.git

cd PerceptualAudio/dataset

unzip audio_perception.zip

More information on the JND framework can be found in the paper here. The text files in the subfolder dataset contain information about human perceptual judgments. This sets up the dataset for training the loss function.

To use a custom dataset, follow these steps:

- Follow a similar framework to obtain human perceptual judgments and store them in the dataset subdirectory. Also create a text file containing the results of all human perceptual judgments, using the convention reference_audio_path \t noisy_audio_path \t human_judgment, where the judgment is 0 (same) or 1 (different). For an example, see any text file in the dataset subdirectory.

- Edit dataloader.py to reflect the new names and paths of the folders and text file.

- Run main.py (after selecting the most appropriate set of parameters).
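The judgment file convention described in the steps above can be checked with a small parser before training. This is a minimal sketch that assumes one judgment per line with exactly three tab-separated fields. The function name read_judgments is hypothetical and is not the loader used in dataloader.py.

```python
import csv


def read_judgments(path):
    """Parse lines of the form reference_path<TAB>noisy_path<TAB>label.

    Returns a list of (reference_path, noisy_path, label) tuples, where
    label is 0 (same) or 1 (different). Hypothetical helper, not part of
    the repository.
    """
    rows = []
    with open(path, newline="") as handle:
        reader = csv.reader(handle, delimiter="\t")
        for line_no, fields in enumerate(reader, start=1):
            if not fields:
                continue  # skip blank lines
            if len(fields) != 3:
                raise ValueError("line %d: expected 3 fields, got %d"
                                 % (line_no, len(fields)))
            ref, noisy, label = (field.strip() for field in fields)
            if label not in ("0", "1"):
                raise ValueError("line %d: label must be 0 or 1, got %r"
                                 % (line_no, label))
            rows.append((ref, noisy, int(label)))
    return rows
```

Running this over a new judgment file catches malformed lines early, before they surface as errors inside the training loop.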

Once you train a model, you can use the trained model to infer the distances between audio recordings.

You can use one of our trained models as a metric. You can also use your own trained loss function as a metric for evaluation.

To use a trained model as a metric, follow these steps:

- Make sure you have installed all the requirements specified in the requirements.txt file in the repo.

- Look at metric_use.py for full information on how to use the trained model to infer distances between audio files. In short, you need to change the dataloader function load_full_data_list(). You also need to provide the path of the trained model as an input argument.

“Off-the-shelf” deep network embeddings have been used as an effective training objective and have been shown to correlate well with human perceptual judgments in the vision setting, even without being explicitly trained on human perceptual judgments. We first investigate whether similar trends hold in the audio setting. To that end, we first train a model on two audio tasks from the DCASE 2016 Challenge: acoustic scene classification and domestic audio tagging. We keep the model architecture the same so that we can compare different training regimes. More information on training this pretrained "off-the-shelf" model can be found in this repo.

- pretrained - pretrained "off-the-shelf" model

- linear - training linear layers over the pretrained "off-the-shelf" model

- finetune - loading the pretrained "off-the-shelf" weights but training both the linear layer and the bulk model

- scratch - training the full model from randomly initialized weights.
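One way to read the four regimes above is as combinations of three choices: whether pretrained weights are loaded, whether the bulk model is trained, and whether the linear layers are trained. The table below is a sketch of that reading. The flag names are hypothetical, and the entry for pretrained assumes that nothing is trained on the JND data in that regime, which the list does not state explicitly.

```python
from collections import namedtuple

# Hypothetical flags for illustration; not the repository's argument names.
Regime = namedtuple("Regime", ["load_pretrained", "train_base", "train_linear"])

REGIMES = {
    "pretrained": Regime(load_pretrained=True, train_base=False, train_linear=False),
    "linear": Regime(load_pretrained=True, train_base=False, train_linear=True),
    "finetune": Regime(load_pretrained=True, train_base=True, train_linear=True),
    "scratch": Regime(load_pretrained=False, train_base=True, train_linear=True),
}


def trainable_parts(name):
    """Return which parts of the model are updated under a given regime."""
    regime = REGIMES[name]
    parts = []
    if regime.train_base:
        parts.append("base")
    if regime.train_linear:
        parts.append("linear")
    return parts
```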

As an application of our loss function, we use the trained loss function to train a speech enhancement (SE) model. We use the publicly available dataset from Edinburgh DataShare, found here. We use the dataset without any alteration except resampling it to 16 kHz. Direct links to download and resample the dataset can be found here. Follow the instructions in the script download_sedata.sh in the above repo to download the SE data. In general, we follow the same directory structure.

- PercepAudio (main directory)

  - se_code (train a SE model using the above trained loss function)

    - se_train.py (train the SE system)

    - se_infer.py (infer with the SE system)

    - dataset

      - trainset_clean

      - trainset_noisy

      - valset_clean

      - valset_noisy

    - data_import.py (data loading)

    - network_model.py (NN architecture)

    - summaries folder to store the new trained model with tensorboard files

After you download the dataset, follow this directory structure to copy the audio files accordingly.
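The four dataset folders can be created up front so that the audio files only need to be copied into place. The sketch below assumes that the dataset folder sits directly inside se_code, which is how the listing above reads. The function name make_se_dataset_dirs is hypothetical.

```python
from pathlib import Path

# Folder names taken from the directory listing above.
SE_DATASET_SUBDIRS = (
    "trainset_clean",
    "trainset_noisy",
    "valset_clean",
    "valset_noisy",
)


def make_se_dataset_dirs(se_code_dir):
    """Create se_code_dir/dataset/<subdir> for each expected subfolder.

    Assumes the dataset folder lives directly inside se_code.
    Returns the list of created (or already existing) paths.
    """
    dataset_dir = Path(se_code_dir) / "dataset"
    created = []
    for name in SE_DATASET_SUBDIRS:
        subdir = dataset_dir / name
        subdir.mkdir(parents=True, exist_ok=True)
        created.append(subdir)
    return created
```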

We make use of our metric as a loss function for training an SE model. After you have downloaded the noisy dataset above (and kept the files at the correct locations), you can start training by running the command:

python se_train.py --args.....

The trained model is stored in the summaries folder, under the folder name that you specify as an argument. The model is saved as 'se_model_'+str(seconds)+'.ckpt', where seconds is the time in seconds since the epoch, so that training can be easily monitored.

After you train an SE model, you can use the same trained model to denoise audio files. Simply run
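Because the timestamp is embedded in the checkpoint name, the most recent checkpoint can be found by parsing the file names. The sketch below assumes that seconds is written as an integer or a decimal number, and it accounts for the fact that a TensorFlow 1.x checkpoint is stored as several files sharing the .ckpt prefix (for example .index and .meta). Both function names are hypothetical.

```python
import re
import time

# Matches the checkpoint prefix, e.g. "se_model_1600000000.ckpt", even when
# followed by a suffix such as ".index" or ".meta".
CKPT_PATTERN = re.compile(r"^se_model_(\d+(?:\.\d+)?)\.ckpt")


def checkpoint_name(seconds=None):
    """Build a checkpoint name following the convention described above."""
    if seconds is None:
        seconds = int(time.time())  # assumption: whole seconds since the epoch
    return "se_model_" + str(seconds) + ".ckpt"


def latest_checkpoint(filenames):
    """Return the checkpoint prefix with the largest timestamp, or None."""
    stamped = []
    for name in filenames:
        match = CKPT_PATTERN.match(name)
        if match:
            stamped.append((float(match.group(1)), match.group(0)))
    return max(stamped)[1] if stamped else None
```

The returned prefix is the form that a TensorFlow 1.x saver expects when restoring a model.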

python se_infer.py --args....

with a suitable set of arguments. The denoised files will be stored in the folder that you specify as an argument to the script. Because the SE model is large, inference takes a couple of hours on a CPU and less than 5 minutes on a GPU.

If you use our code for research, please use the following to cite.

@inproceedings{Manocha:2020:ADP,
  author = "Pranay Manocha and Adam Finkelstein and Richard Zhang and Nicholas J. Bryan and Gautham J. Mysore and Zeyu Jin",
  title = "A Differentiable Perceptual Audio Metric Learned from Just Noticeable Differences",
  booktitle = "Interspeech",
  year = "2020",
  month = oct
}

The source code is published under the MIT license. See LICENSE for details. In general, you can use the code for any purpose with proper attribution. If you do something interesting with the code, we'll be happy to know. Feel free to contact us. The primary contact is Pranay Manocha.